AI and High-Performance Computing (HPC) workloads move huge volumes of data, so they demand fast networks. 400 Gigabit Ethernet (400GbE) and 800 Gigabit Ethernet (800GbE) optics are central to meeting that demand. In NVIDIA AI clusters, network speed is vital: GPU-to-GPU and GPU-to-storage links must keep pace with the GPUs themselves, which makes choosing the right optic form factor a critical decision.

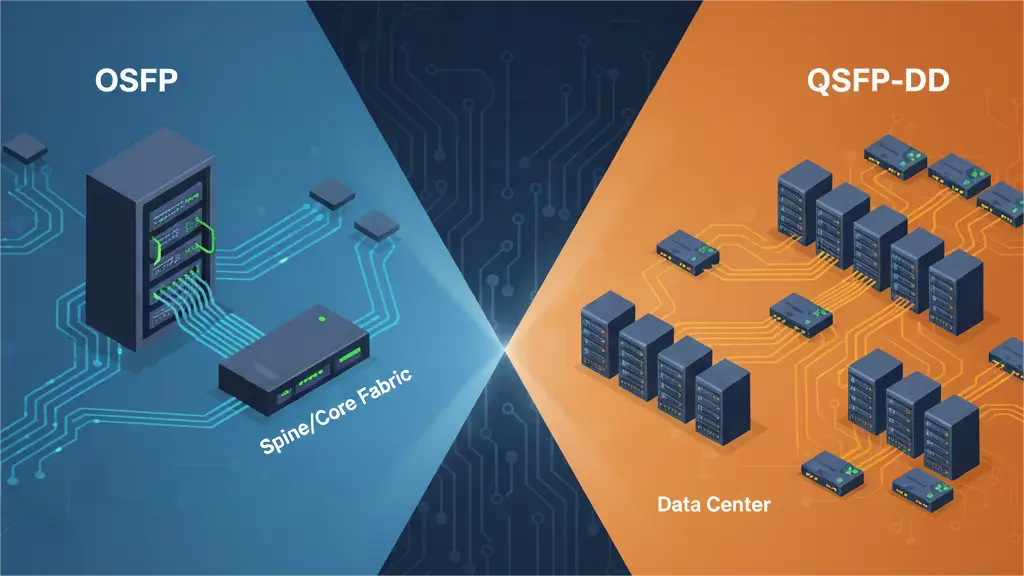

OSFP (Octal Small Form-factor Pluggable) and QSFP-DD (Quad Small Form-factor Pluggable Double Density) lead the 400G/800G market. Both offer high bandwidth, yet each has distinct advantages that affect rack density, thermal management, and future scalability. This guide compares OSFP and QSFP-DD to help network architects choose the right form factor for demanding AI environments.

The Rise of 400G and 800G in NVIDIA AI Clusters

NVIDIA’s latest GPU platforms, such as the H100 Tensor Core GPU and the DGX H100 system, need fast networks. Their network adapters, like the ConnectX-7 SmartNIC, run at up to 400Gb/s per port, and Quantum-2 InfiniBand and Spectrum-X Ethernet switches support these speeds as well. Optical interconnects must match these rates to avoid network bottlenecks and keep data flowing to the GPUs consistently.

Why Form Factor Matters for AI

- Rack Density: AI clusters are often very dense, and optic size determines how many ports fit on a switch faceplate.

- Thermal Management: Higher bandwidth means more power and more heat. Good thermal design keeps optics reliable, which is vital in hot AI racks.

- Power Consumption: AI data centres draw enormous amounts of power, so energy-efficient optics matter.

- Future-Proofing: The ability to scale to future speeds (e.g., 1.6T) should influence today’s design choices.

QSFP-DD: The Double-Density Evolution

QSFP-DD is an evolution of the QSFP, QSFP+, and QSFP28 family. “DD” stands for “Double Density”: it adds a second row of electrical contacts, doubling the electrical lanes from 4 to 8.

- Key Characteristics:

  - Dimensions: It is compact, with a size similar to previous QSFP generations.

  - Electrical Lanes: It has 8 lanes. Each runs at 50Gb/s (PAM4) for 400G or 100Gb/s (PAM4) for 800G.

  - Power Consumption: It generally uses less power than OSFP. Its smaller shell has less room to dissipate heat, so QSFP-DD modules are designed to lower power envelopes.

  - Backward Compatibility: QSFP-DD ports are backward compatible with QSFP28 (100G) and QSFP56 (200G) modules. This allows mixed deployments and makes upgrades easier.

  - MSA: It is defined by the QSFP-DD MSA (Multi-Source Agreement).
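The lane figures above reduce to one piece of arithmetic: aggregate bandwidth equals the number of electrical lanes times the nominal per-lane rate. The Python sketch below illustrates this; the 4 × 25G QSFP28 row is included only for comparison, and the helper name `aggregate_gbps` is hypothetical rather than part of any MSA or vendor API.

```python
# Illustrative sketch: aggregate module bandwidth = electrical lanes x nominal per-lane rate.
# The helper name is hypothetical, not part of any MSA or vendor API.

def aggregate_gbps(lanes: int, per_lane_gbps: int) -> int:
    """Return the nominal aggregate bandwidth of a pluggable module in Gb/s."""
    return lanes * per_lane_gbps

# (form factor, electrical lanes, nominal per-lane rate in Gb/s)
configs = [
    ("QSFP28", 4, 25),         # single contact row: 4 lanes
    ("QSFP-DD 400G", 8, 50),   # second contact row doubles lanes to 8, 50G PAM4 each
    ("QSFP-DD 800G", 8, 100),  # same 8 lanes at 100G PAM4 each
]

for name, lanes, rate in configs:
    print(f"{name}: {lanes} x {rate}G = {aggregate_gbps(lanes, rate)}G")
```

OSFP also uses 8 lanes at the same per-lane rates, so lane count alone gives neither form factor a bandwidth edge.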

- Advantages for NVIDIA AI Clusters:

  - High Port Density: Its compact size allows many ports per switch; up to 36 QSFP-DD ports fit on a 1U faceplate. This maximises port count on the InfiniBand/Ethernet switches that connect NVIDIA DGX systems.

  - Established Ecosystem: It builds on the mature, widely deployed QSFP ecosystem. This means broader vendor support and can lead to competitive pricing.

  - Backward Compatibility: This simplifies migration for existing 100G/200G NVIDIA networks and allows gradual upgrades.
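Port density translates directly into faceplate bandwidth: ports per rack unit times per-port speed. The sketch below uses the 36-port 1U figure quoted above for QSFP-DD; the 32-port 1U figure for OSFP is an assumption for illustration only, so check your switch’s datasheet for real port counts.

```python
# Illustrative sketch: total faceplate bandwidth of a 1U switch.
# ASSUMPTION: 32 OSFP ports per 1U is a hypothetical example, not a vendor spec.

PORTS_PER_1U = {
    "QSFP-DD": 36,  # density figure quoted in the text
    "OSFP": 32,     # hypothetical, for comparison only
}

def faceplate_tbps(ports: int, port_gbps: int) -> float:
    """Return total faceplate bandwidth in Tb/s."""
    return ports * port_gbps / 1000

for form_factor, ports in PORTS_PER_1U.items():
    for speed in (400, 800):
        print(f"{form_factor} x{ports} @ {speed}G: {faceplate_tbps(ports, speed):.1f} Tb/s")
```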

OSFP: Optimised for Power and Future Growth

OSFP is a newer form factor designed from the start for 400G. It prioritises power dissipation and future scalability, and it is slightly wider and longer than QSFP-DD.

- Key Characteristics:

  - Dimensions: It is slightly larger than QSFP-DD, which allows better heat dissipation.

  - Electrical Lanes: It has 8 lanes. Each runs at 50Gb/s (PAM4) for 400G or 100Gb/s (PAM4) for 800G.

  - Power Consumption: It handles higher power, which complex optical engines often need for longer reach or higher speeds.

  - No Backward Compatibility: It is not backward compatible and does not accept QSFP-series modules.

  - MSA: It is defined by the OSFP MSA (Multi-Source Agreement).

- Advantages for NVIDIA AI Clusters:

  - Superior Thermal Management: Its larger size offers more surface area and more internal volume for heatsinks, so it dissipates heat more effectively. This is crucial for high-power AI applications, where sustained performance is vital and rack temperatures can be challenging.

  - Future Scalability: Its design has headroom for future power and cooling needs, including speeds like 1.6T. This makes it a “future-proof” choice for bleeding-edge NVIDIA deployments.

  - High Power Module Support: It suits higher-power optical modules, including those for longer-reach single-mode applications (e.g., DR4/FR4).

OSFP vs QSFP-DD for NVIDIA AI Clusters: Making the Choice

Here’s a comparative breakdown to aid your decision for an NVIDIA-powered AI network:

| Feature | QSFP-DD | OSFP | Impact on NVIDIA AI Cluster |
| --- | --- | --- | --- |
| Size/Footprint | More compact; similar to QSFP28. | Slightly larger: wider and longer. | Rack Density: QSFP-DD allows higher port density, fitting more ports on a switch faceplate. This is key for Top-of-Rack switches in dense NVIDIA DGX clusters. OSFP offers slightly lower density, though fewer ports per faceplate may simplify cabling. |
| Thermal Mgmt. | Good, but constrained by size. | Excellent; larger heatsink area. | Sustained Performance/Reliability: OSFP dissipates heat better, which suits hot AI racks full of powerful GPUs. It improves reliability for high-power optics and helps prevent thermal throttling. |
| Power Handling | Up to ~12-14W for 400G; can go higher for 800G. | Up to ~18W+ for 400G; designed for higher power. | Energy Efficiency & Capability: QSFP-DD typically draws less power per port, which can make the cluster more energy-efficient for typical short/medium-reach AI links. OSFP handles more power and so supports more advanced optics. |
| Electrical Lanes | 8x50G (400G), 8x100G (800G). | 8x50G (400G), 8x100G (800G). | Bandwidth Scaling: Both use identical electrical lanes and similar signalling to reach 400G and 800G, so neither has an inherent bandwidth advantage. |
| Backward Comp. | Accepts QSFP28/56 modules. | None. | Upgrade Path: QSFP-DD simplifies migration for NVIDIA networks that use 100G/200G QSFP28/56 modules, because its ports accept the older modules. OSFP requires a full module change. |
| Ecosystem/Adoption | Broader adoption; wider vendor support. | Growing; less widespread than QSFP-DD. | Supply Chain & Pricing: QSFP-DD’s mature ecosystem can improve availability and pricing for standard modules. OSFP adoption is nonetheless strong with certain switch vendors. |
| NVIDIA Specifics | Used in some NVIDIA Spectrum Ethernet switches; QSFP-family ports also appear on ConnectX adapters. | Used in NVIDIA Quantum-2 InfiniBand switches and some DGX systems. | Vendor Alignment: NVIDIA’s product choices shape yours. InfiniBand switches favour OSFP, while NICs and some Ethernet switches use QSFP-family ports. Your choice often depends on your specific NVIDIA hardware, so always check equipment specs. |
| Future Scalability | Limited headroom for higher power/size. | Better potential for 1.6T+. | Long-Term Investment: For AI labs planning beyond 800G, OSFP offers a robust platform for future optics that need more power and cooling. |
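To see what the Power Handling row means at fabric scale, multiply per-module power by the number of populated ports. The sketch below uses the table’s approximate upper figures (~14W for a 400G QSFP-DD module, ~18W for a 400G OSFP module) as worst-case placeholders; real modules vary widely, so substitute datasheet values. The 32-port switch and 10-switch fabric are hypothetical sizes.

```python
# Illustrative worst-case optics power estimate.
# ASSUMPTIONS: per-module watts are the approximate upper bounds from the comparison
# table; port and switch counts are hypothetical examples.

MODULE_WATTS_400G = {"QSFP-DD": 14.0, "OSFP": 18.0}

def optics_power_w(module_watts: float, ports_per_switch: int, switches: int) -> float:
    """Total optics power in watts when every port is populated."""
    return module_watts * ports_per_switch * switches

PORTS = 32     # hypothetical ports per switch
SWITCHES = 10  # hypothetical fabric size

for ff, watts in MODULE_WATTS_400G.items():
    total = optics_power_w(watts, PORTS, SWITCHES)
    print(f"{ff}: {total:.0f} W ({total / 1000:.2f} kW) across {PORTS * SWITCHES} ports")
```

Because upper-bound figures are used, this overstates typical draw; the useful output is the relative gap that the cooling plan must absorb.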

PHILISUN’s Solutions for Both OSFP and QSFP-DD in NVIDIA AI Clusters

PHILISUN understands what NVIDIA AI cluster architects need: flexibility, reliability, and cost-effectiveness. We offer a full portfolio of 400G and 800G optics, including transceivers, AOCs, and DACs, in both QSFP-DD and OSFP form factors.

- 100% Guaranteed Compatibility: Our modules are tested on actual NVIDIA hardware to confirm full compatibility and seamless operation.

- Full Range of Standards: We have solutions for all common reaches and fibre types, including 400GBASE-SR8, DR4, and FR4, 800G variants for Ethernet, and NDR InfiniBand modules.

- Cost-Effective Alternatives: Our solutions cost less than OEM modules, delivering substantial savings while keeping performance and reliability high.

- Expert Support: Our technical team knows NVIDIA AI networking and can help you choose the OSFP or QSFP-DD solution that best fits your deployment.
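Choosing among these standards usually starts with link distance. The sketch below picks the shortest-reach 400G option that covers a given link, using nominal IEEE 802.3 reaches (100m over OM4 multimode for SR8, 500m single-mode for DR4, 2km single-mode for FR4). The `pick_400g_optic` function is a hypothetical helper; a real selection should also weigh the installed fibre plant, connector type, and cost.

```python
# Hypothetical helper: pick the shortest-reach 400G optic covering a link length.
# Reaches are nominal IEEE 802.3 values; verify against module datasheets.
from typing import Optional

# (standard, nominal max reach in metres, fibre type), ordered by increasing reach
OPTIONS_400G = [
    ("400GBASE-SR8", 100, "multimode (OM4)"),
    ("400GBASE-DR4", 500, "single-mode"),
    ("400GBASE-FR4", 2000, "single-mode"),
]

def pick_400g_optic(link_m: float) -> Optional[tuple[str, int, str]]:
    """Return the first option whose reach covers link_m, or None if none do."""
    for option in OPTIONS_400G:
        if link_m <= option[1]:
            return option
    return None

for distance in (30, 300, 1500, 5000):
    choice = pick_400g_optic(distance)
    print(distance, "m ->", choice[0] if choice else "longer-reach optic needed")
```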

Examples of PHILISUN’s NVIDIA-Compatible OSFP/QSFP-DD Products

- PHILISUN 400G QSFP-DD SR8: Ideal for short-reach (up to 100m) multimode links, both within a rack and between adjacent racks in an NVIDIA DGX pod.

- PHILISUN 400G QSFP-DD AOC: A high-performance, low-latency active optical cable for ultra-dense intra-rack or inter-rack links that helps future-proof NVIDIA systems.

Conclusion

No single “winner” exists in the OSFP vs QSFP-DD debate. The right choice depends on your specific needs: network topology, NVIDIA switch and NIC hardware, density requirements, and thermal strategy.

- QSFP-DD is strong if you need maximum port density or backward compatibility with existing QSFP28/QSFP56 modules, especially with NVIDIA hardware built around QSFP-family ports, such as some Spectrum Ethernet switches.

- OSFP has compelling advantages if you need superior thermal management for high-power optics or future scalability towards 1.6T+. It also aligns with NVIDIA’s Quantum-2 InfiniBand switches.
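The criteria above can be condensed into a rough rule of thumb. The sketch below is a hypothetical helper, not a vendor tool: the `Requirements` fields are assumptions chosen to mirror the bullets, and the port type fixed by your NVIDIA hardware always overrides the scoring.

```python
# Hypothetical rule-of-thumb encoding of the OSFP vs QSFP-DD criteria.
# Field names are illustrative; they do not come from any NVIDIA or MSA tool.
from dataclasses import dataclass
from typing import Optional

@dataclass
class Requirements:
    hardware_port: Optional[str] = None  # cage type fixed by your switch/NIC, if any
    needs_max_density: bool = False
    reuses_qsfp28_or_qsfp56: bool = False
    high_power_optics: bool = False
    plans_beyond_800g: bool = False

def recommend(req: Requirements) -> str:
    # Existing hardware dictates the cage type, so it takes priority.
    if req.hardware_port in ("OSFP", "QSFP-DD"):
        return req.hardware_port
    osfp_score = int(req.high_power_optics) + int(req.plans_beyond_800g)
    qsfpdd_score = int(req.needs_max_density) + int(req.reuses_qsfp28_or_qsfp56)
    if osfp_score == qsfpdd_score:
        return "either (check specs)"
    return "OSFP" if osfp_score > qsfpdd_score else "QSFP-DD"

print(recommend(Requirements(hardware_port="OSFP")))
print(recommend(Requirements(needs_max_density=True, reuses_qsfp28_or_qsfp56=True)))
print(recommend(Requirements(high_power_optics=True, plans_beyond_800g=True)))
```

For example, a fabric built on Quantum-2 InfiniBand switches would pass `hardware_port="OSFP"`, and the scoring never comes into play.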

Your decision should align with your NVIDIA hardware. PHILISUN offers robust, tested, and cost-effective OSFP and QSFP-DD interconnects to help your NVIDIA AI cluster reach its full potential.