NVIDIA H800 vs H100: A Technical Deep Dive into Politically-Defined AI Chips

The NVIDIA H100 Tensor Core GPU is based on the groundbreaking Hopper architecture. It stands as the undisputed champion for accelerating large language models (LLMs), generative AI, and next-generation high-performance computing (HPC). Its prowess defines the cutting edge of AI development.

However, the global landscape of advanced technology is increasingly influenced by geopolitical factors. Due to stringent export controls on high-performance computing chips, NVIDIA introduced the H800 Tensor Core GPU—a modified variant of the H100 specifically designed for certain restricted markets.

The H800 is not a technical evolution or a parallel product line in the traditional sense; it is a direct consequence of compliance requirements. This article will provide a rigorous technical analysis to dissect the specific performance vectors where the H800 is constrained and the downstream impact on real-world AI and HPC workloads.

H100 and H800: Same Silicon, Different Capabilities

Fundamentally, both the H100 and H800 are derived from the same silicon die. And they are fabricated on TSMC’s advanced 4N process and built upon the formidable Hopper architecture. They share the same transistor count (80 billion) and the foundational CUDA core and Tensor Core arrays. However, to meet specific throughput per chip and interconnect speed restrictions defined by export regulations, NVIDIA implemented precise technical modifications, primarily impacting inter-GPU communication bandwidth and double-precision floating-point performance.

| Feature | NVIDIA H100 (SXM / PCIe) | NVIDIA H800 | Key Technical Difference |

| Architecture | Hopper | Hopper | No intrinsic architectural difference |

| Manufacturing Process | TSMC 4N | TSMC 4N | Identical |

| Transistor Count | 80 Billion | 80 Billion | Identical |

| Memory (HBM3/HBM3e) | 80GB (SXM) / 94GB (SXM) | 80GB (SXM) / 94GB (SXM) | Memory capacity and bandwidth (e.g., 3.35 TB/s) largely consistent |

| AI Compute (FP8 / FP16) | Peak theoretical compute power consistent | Peak theoretical compute power largely consistent | Core AI inference & training compute remains high |

| NVLink Interconnect Bandwidth | 900 GB/s (SXM, total aggregate) / 600 GB/s (single link, bidirectional) | 400 GB/s (SXM, total aggregate) / 300 GB/s (single link, bidirectional) | Inter-GPU bandwidth severely restricted |

| Double Precision (FP64) Compute | 60 TFLOPS | 1 TFLOPS | HPC performance drastically reduced |

| Target Market | Global (excluding restricted regions) | Restricted markets (e.g., Mainland China) | Market segmentation driven by policy |

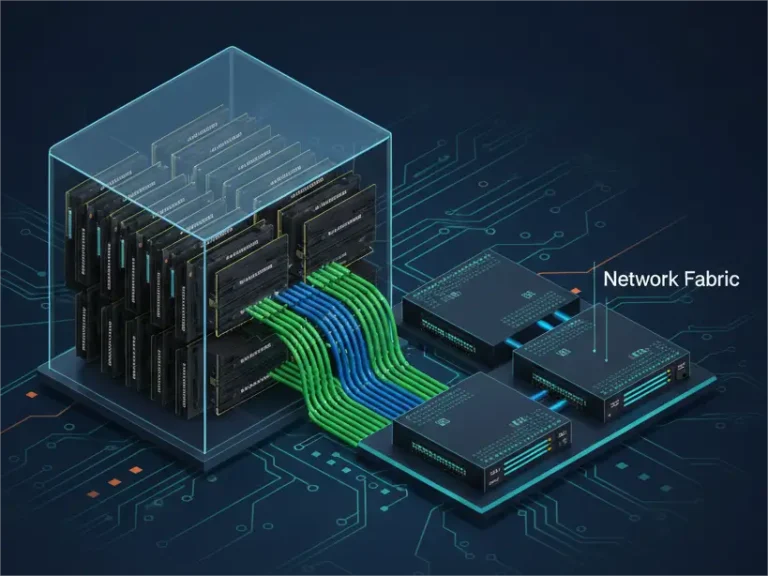

NVLink Bandwidth Reduction: Impact on Multi-GPU Scalability

NVIDIA’s NVLink is a cornerstone technology for high-performance, low-latency, point-to-point GPU interconnectivity. Its role is paramount in accelerating multi-GPU training within a single server and across distributed clusters.

- H100 (Unrestricted): The H100 SXM variant features 18 NVLink 4.0 links, providing a staggering 900 GB/s of total aggregate bidirectional bandwidth. Each individual link offers 50 GB/s, enabling complex all-to-all communication patterns among 8 GPUs in an HGX system without relying solely on PCIe or external networking. This bandwidth is crucial for efficient data exchange during model parallelism, data parallelism, and gradient synchronization in massive LLM training.

- H800 (Restricted): In compliance with export regulations, the H800’s NVLink interconnect bandwidth is significantly reduced to 400 GB/s total aggregate bidirectional bandwidth. This means the individual NVLink links are effectively clocked at a lower speed (approximately 25 GB/s bidirectional per link). And it resulted in a 55% reduction in total aggregate bandwidth compared to the H100.

Technical Impact Analysis:

- Increased Communication Latency: For compute-bound workloads, especially those requiring frequent data synchronization (e.g., large batch sizes, complex model architectures, optimizer states in deep learning), the reduced NVLink bandwidth translates directly into higher inter-GPU communication latency.

- Diminished Multi-GPU Scaling Efficiency: The efficiency gains from scaling out to multiple GPUs are heavily dependent on the communication fabric. With half the NVLink bandwidth, an H800-based HGX system will experience a bottleneck earlier than an H100 system, potentially leading to a plateau in performance scaling or requiring longer training times for the same number of epochs.

- Data Movement Bottleneck: Training models with billions or trillions of parameters generates vast amounts of data (e.g., weights, activations, gradients) that must be exchanged between GPUs. The H800’s restricted NVLink speed will inevitably slow down these data movements, impacting overall training throughput.

Double Precision (FP64) Compute: Targeting HPC Capabilities

Beyond AI workloads, the H100 is also a powerhouse for traditional High-Performance Computing (HPC) applications. The H800, however, sees a drastic cut in this specific capability.

- H100: Boasts a formidable 60 TFLOPS of peak theoretical FP64 (double-precision floating-point) compute performance. This is critical for scientific simulations in fields like molecular dynamics, fluid dynamics, climate modeling, and nuclear physics, where high precision is non-negotiable.

- H800: The FP64 compute performance of the H800 is severely constrained to a mere 1 TFLOPS.

Technical Impact Analysis:

- Effective Disqualification for Core HPC: This profound reduction effectively disqualifies the H800 for most traditional HPC applications that rely on high-fidelity FP64 calculations. It transforms the H800 into a GPU primarily optimized for AI training and inference at lower precisions (FP16, BF16, FP8).

- Strategic Compliance: This specific limitation underscores the intent of the export controls: to prevent restricted regions from leveraging state-of-the-art hardware for general-purpose scientific supercomputing, which often has dual-use implications. The H800 is specifically tailored to meet the letter of these regulations.

Operational Considerations for H800 Deployments

For data center operators and AI researchers working with H800 systems, these technical limitations translate into direct operational consequences.

- Increased TCO for Equivalent Performance: To achieve the same training throughput for large-scale models as an H100 cluster, an organization might need to deploy a significantly larger number of H800 GPUs. This increases the total cost of ownership (TCO) through higher capital expenditure on hardware, increased power consumption, and more complex cooling infrastructure per performance unit.

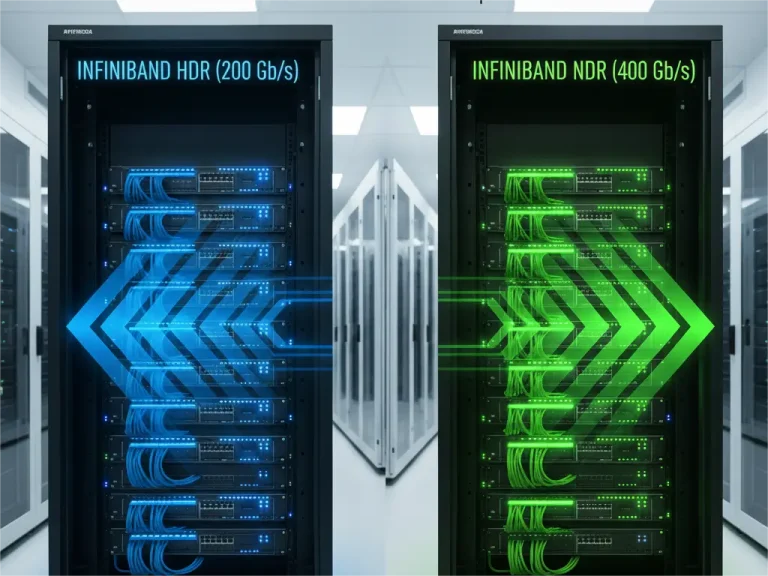

- Networking Architecture Remains Critical: Even with reduced internal GPU bandwidth, the need for robust external networking for multi-node communication is unchanged. High-speed InfiniBand (e.g., NDR 400Gb/s) or high-bandwidth Ethernet (e.g., 400GbE) remains essential to connect HGX H800 servers, enabling efficient data transfer between nodes and preventing external networking from becoming an even greater bottleneck.

- PHILISUN’s Role: It is crucial that these external interconnects are built with reliable, high-performance optical transceivers and cables. PHILISUN offers a comprehensive portfolio of 200G/400G InfiniBand and Ethernet optical transceivers and DAC/AOC cables, designed to meet the demanding latency and throughput requirements of even the most constrained H800 AI clusters, ensuring that the external network does not compound the internal NVLink limitations.

- Software Optimization Challenges: Developers may need to employ more aggressive communication-avoiding algorithms or optimize their code to minimize inter-GPU data transfers, working around the NVLink limitations.

Why PHILISUN is Your Trusted Partner for High-Performance AI Interconnects

In the demanding world of AI and HPC, where every nanosecond and every gigabit counts, the quality and reliability of your networking components are paramount. This is especially true when optimizing constrained systems like the H800.

As a professional provider of high-quality optical transceivers and cabling solutions, PHILISUN specializes in high-speed interconnects. Our commitment is built on:

- Uncompromising Quality: We utilize only premium-grade components and adhere to stringent manufacturing standards, ensuring our optical transceivers deliver consistent, error-free performance.

- Guaranteed Compatibility: All PHILISUN optical transceivers undergo rigorous testing in real-world network equipment from major OEMs. Our precise coding ensures seamless compatibility and reliable operation, eliminating integration headaches.

- Expert Support: Our team possesses deep technical expertise in data center networking and AI interconnects, providing invaluable guidance to help you design and optimize your infrastructure.

Whether you are deploying H100 or H800, PHILISUN‘s optical transceivers provide the robust, high-bandwidth foundation necessary to maximize your GPU investment.

Conclusion

The NVIDIA H800 is a testament to the complex interplay between technological innovation and geopolitical strategy. From a purely technical standpoint, it is a performance-compromised version of the H100.

- The H100 represents the full, unadulterated power of the Hopper architecture for both cutting-edge AI and demanding HPC workloads.

- The H800 retains strong capabilities for core AI training and inference at lower precisions but is notably restricted in its multi-GPU scaling efficiency (NVLink bandwidth) and general scientific computing (FP64 performance).

For users, deploying H800 means consciously accepting these performance ceilings. While it can still deliver substantial AI acceleration, the trade-offs in scaling efficiency and HPC capabilities are significant and must be factored into hardware procurement, cluster design, and workload planning.

For any high-performance AI deployment, the underlying network infrastructure—particularly the optical transceivers for external interconnectivity—must be meticulously planned to complement the GPUs’ capabilities. PHILISUN understands these critical demands and offers optimized solutions to ensure your AI infrastructure performs at its peak.

Accelerate Your AI and HPC Infrastructure:

Explore PHILISUN’s High-Performance 200G/400G InfiniBand/Ethernet Optical Transceivers!