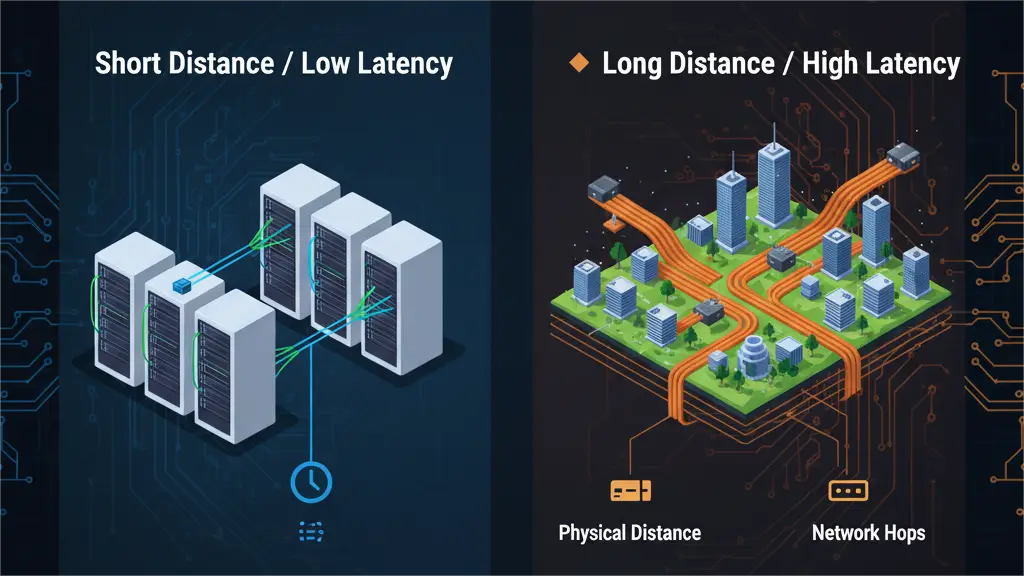

In the world of high-speed data, every nanosecond counts. For some applications, network speed is not just about bandwidth; it is about latency. Latency is the delay data experiences: the time it takes for a signal to travel from point A to point B.

You might think light is instant. It is incredibly fast, but even light has a finite speed, and signals are delayed further as they pass through fiber optic cables and network devices. This “lag” is critical for many businesses.

This guide dives deep into fiber optic latency. We will explore its causes and the physics behind it, and examine how different components contribute to the delay. Understanding latency is vital for High-Frequency Trading (HFT), Artificial Intelligence (AI) clusters, and High-Performance Computing (HPC). We will show how choosing the right PHILISUN Optical Transceivers can help minimize this critical delay.

The Physics: Light in a Vacuum vs. Light in Glass

Light travels at its fastest in a perfect vacuum. This is the ultimate speed limit of the universe.

1. Speed of Light in a Vacuum:

- In a vacuum, light travels at exactly 299,792,458 meters per second.

- This is roughly 30 centimeters per nanosecond.

- So, a signal could cross 300 meters in just 1 microsecond.

2. The Impact of Refractive Index (Light in Glass):

- Fiber optic cables are made of glass (or plastic). When light enters glass, it slows down.

- The refractive index (n) measures this slowing: light travels at the vacuum speed divided by n. The glass in standard fiber has a refractive index of about 1.45 to 1.50.

- The Result: Light travels roughly one-third slower (about 31% to 33%) in fiber than in a vacuum.

- Practical Speed: In a fiber, light travels roughly 200,000,000 meters per second. This translates to approximately 5 nanoseconds per meter of fiber.

- Example: A 1-kilometer (1000-meter) fiber link will introduce approximately 5 microseconds of propagation delay.

This propagation delay is the most fundamental part of fiber optic latency. It is purely physical. You cannot eliminate it. But you can minimize it by choosing the shortest possible fiber path.
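The arithmetic above can be checked with a few lines of Python. This is a minimal sketch, not a measurement tool: the function name `propagation_delay_ns` and the default refractive index of 1.468 are assumptions chosen for illustration (the value simply sits inside the 1.45 to 1.50 range quoted above).

```python
C_VACUUM_M_PER_S = 299_792_458  # speed of light in a vacuum, meters per second


def propagation_delay_ns(length_m: float, refractive_index: float = 1.468) -> float:
    """One-way propagation delay in nanoseconds for a fiber of the given length.

    The default refractive index is an assumed, typical value; use the figure
    from your fiber's data sheet for real planning.
    """
    speed_in_fiber = C_VACUUM_M_PER_S / refractive_index  # meters per second
    return length_m / speed_in_fiber * 1e9


if __name__ == "__main__":
    for length in (1, 100, 1000):
        print(f"{length:>5} m -> {propagation_delay_ns(length):8.1f} ns")
```

With any index in the quoted range, the result stays close to the 5 nanoseconds per meter rule of thumb, which is why that round figure is commonly used for quick estimates.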

The Hardware Factor: Transceiver Latency

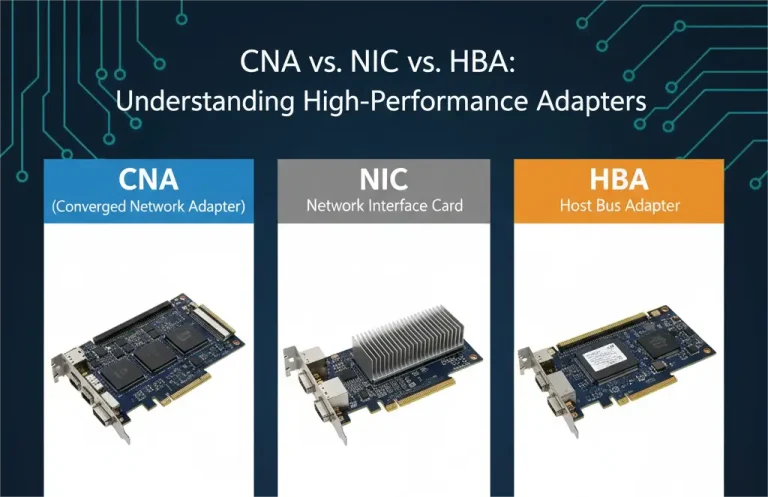

Beyond the fiber itself, network hardware adds its own delays. Optical Transceivers are key here.

1. O-E-O Conversion (Optical-Electrical-Optical):

- Fiber transceivers (like PHILISUN SFP+ or QSFP28 modules) convert electrical signals to light and back again.

- This conversion process takes a small amount of time. It involves lasers, photodiodes, and electrical circuitry.

- This O-E-O conversion latency is typically very small. It can range from a few nanoseconds to tens of nanoseconds per transceiver pair.

2. Forward Error Correction (FEC): The Latency Tax of High Speed

- The Need for FEC: As network speeds increase (especially 100GbE and above), data errors become more likely. To ensure data integrity, many high-speed transceivers use Forward Error Correction (FEC).

- How it Works: FEC adds extra data bits. These bits help the receiver detect and correct errors without re-transmitting data.

- The Cost: FEC processing adds latency, because the receiver must collect a complete block of data before it can check and correct it. The added delay can range from tens to hundreds of nanoseconds per transceiver.

- Trade-off: FEC improves reliability but increases latency. For applications where every nanosecond matters, some specialized transceivers allow FEC to be disabled. However, this increases the risk of uncorrected errors. PHILISUN offers modules with configurable FEC for specific use cases.
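To make the idea of "extra bits that let the receiver fix errors" concrete, here is a toy example using a Hamming(7,4) code. This is an illustration only: high-speed Ethernet links use much stronger Reed-Solomon codes, and the function names below are hypothetical. The principle is the same, though: parity bits are added at the sender, and the receiver corrects a flipped bit without asking for a retransmission.

```python
def hamming74_encode(data_bits):
    """Encode 4 data bits [d1, d2, d3, d4] into a 7-bit codeword."""
    d1, d2, d3, d4 = data_bits
    p1 = d1 ^ d2 ^ d4
    p2 = d1 ^ d3 ^ d4
    p3 = d2 ^ d3 ^ d4
    # Codeword layout, positions 1..7: p1 p2 d1 p3 d2 d3 d4
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(codeword):
    """Correct at most one flipped bit and return the 4 data bits."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]  # parity check over positions 1, 3, 5, 7
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]  # parity check over positions 2, 3, 6, 7
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]  # parity check over positions 4, 5, 6, 7
    error_position = s1 + 2 * s2 + 4 * s3  # 0 means no error detected
    if error_position:
        c[error_position - 1] ^= 1  # flip the faulty bit back
    return [c[2], c[4], c[5], c[6]]


if __name__ == "__main__":
    data = [1, 0, 1, 1]
    received = hamming74_encode(data)
    received[4] ^= 1  # simulate a single bit error on the link
    print("recovered:", hamming74_decode(received), "original:", data)
```

Note that the decoder needs all seven bits before it can compute the checks. Real FEC codes work on far larger blocks, and waiting for a full block is one reason FEC adds latency.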

3. SerDes (Serializer/Deserializer):

- Data often moves in parallel within chips. But it needs to be serialized (converted to a single stream) to travel over a cable. Then it is deserialized at the other end.

- This SerDes process adds a small, but measurable, amount of latency in transceivers.
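The contributions described in this section can be added up into a simple latency budget. The sketch below is hypothetical: the class name `LinkBudget` and every default value are placeholders picked from within the ranges mentioned above, not PHILISUN specifications. Replace them with data sheet figures for real planning.

```python
from dataclasses import dataclass


@dataclass
class LinkBudget:
    """Rough one-way latency budget for a single fiber link (all values in ns)."""

    fiber_length_m: float
    ns_per_meter: float = 5.0    # rule-of-thumb fiber propagation delay
    conversion_ns: float = 10.0  # assumed, per transceiver pair
    fec_ns: float = 100.0        # assumed, per transceiver; set to 0 if FEC is disabled
    serdes_ns: float = 5.0       # assumed, per transceiver

    def components(self) -> dict:
        """Break the budget down by source; a link has two transceivers."""
        return {
            "propagation": self.fiber_length_m * self.ns_per_meter,
            "conversion": self.conversion_ns,
            "fec": 2 * self.fec_ns,
            "serdes": 2 * self.serdes_ns,
        }

    def total_ns(self) -> float:
        return sum(self.components().values())


if __name__ == "__main__":
    for label, budget in (
        ("FEC on", LinkBudget(fiber_length_m=100)),
        ("FEC off", LinkBudget(fiber_length_m=100, fec_ns=0)),
    ):
        print(label, budget.components(), "total:", budget.total_ns(), "ns")
```

With these placeholder numbers, FEC contributes 200 ns of a 720 ns total on a 100-meter link. On short links, hardware delays can be the same order of magnitude as the fiber itself.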

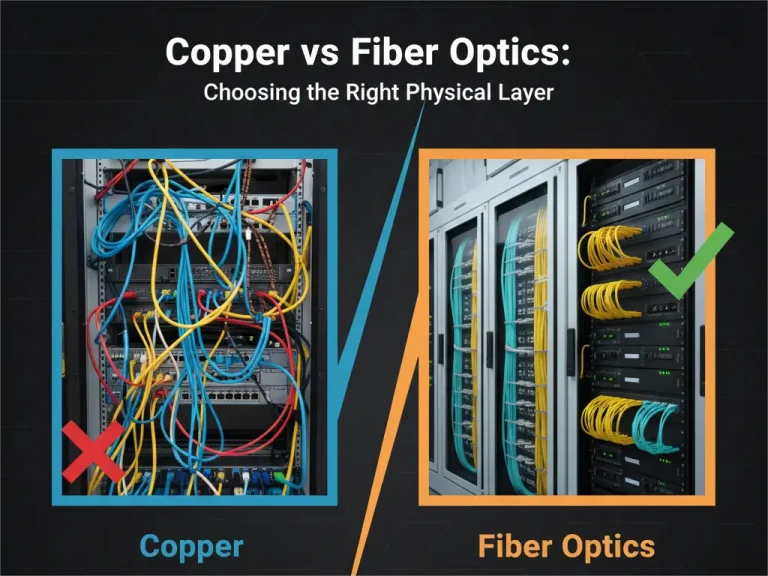

Fiber vs. DAC (Direct Attach Copper): Short Distance Latency

For very short distances within a single rack, Direct Attach Copper (DAC) cables are sometimes considered for low latency.

- DAC’s Advantage: DAC cables transmit data electrically. They do not have the O-E-O conversion delay of optical transceivers.

- DAC Latency: For links under 7 meters, DAC cables can sometimes offer slightly lower latency than a fiber link with two transceivers. This is because they avoid the conversion steps.

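- Fiber’s Win for Longer Distances: Beyond short rack links, fiber quickly becomes the better choice. Signals travel through copper at a per-meter speed comparable to fiber, but attenuation limits passive DAC to a few meters, and longer copper runs need active signal conditioning that adds delay of its own. Over longer distances, propagation delay dominates, and the fixed conversion delay of the transceivers becomes a small fraction of the total.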

- Strategic Choice: For critical, ultra-low latency links within the same rack (e.g., between a server and a Top-of-Rack switch), DAC might be considered. For any link between racks or across longer distances, fiber is the clear winner for minimal latency. PHILISUN offers both DAC and optical solutions to meet specific latency needs.

Reducing Latency with MPO Architectures and Optimized Design

Beyond component choices, network design itself plays a huge role in minimizing latency.

1. Shortest Path Routing:

- Design your network topology for the shortest possible physical paths.

- A well-planned spine-leaf architecture in a data center reduces the number of “hops” data has to take. Fewer hops mean less latency.
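As an illustration of choosing the lowest-latency path, the sketch below runs Dijkstra's algorithm over a small, made-up spine-leaf topology. Everything here is an assumption for demonstration: the node names, the cable lengths, and the 500 ns per-hop device delay are hypothetical, and each link's cost is modeled simply as fiber delay plus one device traversal.

```python
import heapq

NS_PER_METER = 5.0    # rule-of-thumb fiber propagation delay
PER_HOP_NS = 500.0    # assumed device forwarding delay added per hop


def build_graph(links):
    """Turn (node_a, node_b, length_m) tuples into a bidirectional delay graph."""
    graph = {}
    for a, b, length_m in links:
        cost = length_m * NS_PER_METER + PER_HOP_NS
        graph.setdefault(a, {})[b] = cost
        graph.setdefault(b, {})[a] = cost
    return graph


def lowest_latency_path(graph, source, target):
    """Dijkstra's algorithm over link delays. Returns (total_ns, path)."""
    queue = [(0.0, source, [source])]
    settled = set()
    while queue:
        delay, node, path = heapq.heappop(queue)
        if node == target:
            return delay, path
        if node in settled:
            continue  # already reached this node by a path at least as fast
        settled.add(node)
        for neighbor, link_ns in graph.get(node, {}).items():
            if neighbor not in settled:
                heapq.heappush(queue, (delay + link_ns, neighbor, path + [neighbor]))
    return float("inf"), []


# Hypothetical topology: two servers, two leaf switches, two spine switches.
TOPOLOGY = build_graph([
    ("server_a", "leaf1", 3),
    ("leaf1", "spine1", 30),
    ("leaf1", "spine2", 80),
    ("spine1", "leaf2", 30),
    ("spine2", "leaf2", 20),
    ("leaf2", "server_b", 3),
])

if __name__ == "__main__":
    total_ns, path = lowest_latency_path(TOPOLOGY, "server_a", "server_b")
    print(" -> ".join(path), f"({total_ns:.0f} ns)")
```

Both candidate routes cross the same number of devices here, so the shorter cabling through `spine1` wins. With a different per-hop delay or topology, a path with fewer hops but longer cables could come out ahead, which is why both factors belong in the model.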

2. Removing Intermediate Patch Panels and Splices:

- Every connection point (patch panel, splice tray) adds a tiny amount of physical length and potential signal degradation.

- For ultra-low latency, directly connecting equipment with PHILISUN MPO Trunk Cables can minimize these intermediate points. It shaves off nanoseconds.

- MPO Breakout Cables: Using PHILISUN MPO to LC Breakout Cables can directly connect a high-speed QSFP port to multiple SFP+ ports. This simplifies cabling and can reduce latency compared to multiple intermediate devices.

3. Choosing the Right Fiber Type:

- Multimode vs. Single-mode: The refractive index difference between the two is minor, so reach requirements usually dictate the choice. For short runs, multimode (e.g., OM4) is sufficient. For long runs, single-mode is required. The core principle is always the shortest path.

- Bend-Insensitive Fiber: Modern bend-insensitive fiber (e.g., PHILISUN Bend-Insensitive Fiber Patch Cables) can reduce signal loss when cables are bent. This protects signal integrity, which helps avoid bit errors and the latency-increasing retransmissions they can cause.

4. Optimized Network Devices:

- High-performance switches and routers are designed for low latency. They have faster internal processing, and some can start forwarding a frame before it has been fully received (cut-through forwarding). This reduces their contribution to the overall delay.

FAQ: Fiber Latency

Q: Is Single-mode fiber faster than Multimode fiber in terms of latency?

A: Technically, single-mode fiber can have a slightly lower refractive index, meaning light travels marginally faster. However, for typical data center distances, the difference in propagation delay between OM4 multimode and OS2 single-mode fiber is negligible. The biggest factor is the total physical length of the cable. Choose the fiber type that allows the shortest, most reliable path for your distance.

Q: Does cable length really affect ping times?

A: Yes, absolutely. Ping time is a direct measure of round-trip latency. As we saw, light takes about 5 nanoseconds to travel each meter of fiber. So, 1 kilometer of fiber adds about 5 microseconds (0.005 milliseconds) of one-way delay, or 10 microseconds (0.01 milliseconds) to a ping. For very long distances, say 200 kilometers, this adds about 1 millisecond each way, or about 2 milliseconds to the round-trip ping time. This is very significant for HFT.
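For a quick estimate of how much fiber length alone contributes to a ping, the round-trip calculation can be sketched as follows. The function name `fiber_rtt_ms` is hypothetical, and the result covers fiber propagation only, not switches, transceivers, or the hosts themselves.

```python
NS_PER_METER = 5  # approximate one-way delay per meter of fiber


def fiber_rtt_ms(length_km: float) -> float:
    """Round-trip fiber propagation delay in milliseconds (fiber only)."""
    one_way_ns = length_km * 1000 * NS_PER_METER
    return 2 * one_way_ns / 1e6  # double for the return trip, then ns -> ms


if __name__ == "__main__":
    for km in (1, 10, 100):
        print(f"{km:>4} km -> {fiber_rtt_ms(km):.3f} ms round trip")
```

A real ping will always be higher than this figure, since every device on the path adds its own delay on top of the fiber.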

Q: Which PHILISUN transceiver is best for low latency?

A: For ultra-low latency needs, look for modules that operate without FEC when possible, or those with very low inherent FEC latency. For short distances, DAC cables can also be an option. Always consult the specific PHILISUN transceiver data sheet for detailed latency specifications. Our experts can help you select the optimal module for your low-latency requirements.

Conclusion: Latency is a Critical Design Parameter

Latency is a fundamental property of any network. It is not just a secondary metric. For modern, high-demand applications, it can be the most critical factor. Understanding the physics of light, the delays introduced by hardware (especially FEC), and the impact of network design is essential.

Minimizing latency means making intelligent choices: choosing the shortest physical paths, selecting optimized transceivers, and designing the network architecture carefully.

Don’t let hidden delays slow down your mission-critical applications. Design for speed, not just bandwidth. Contact PHILISUN Experts today to discuss low-latency optical solutions tailored for your high-performance computing, AI clusters, or financial trading environments.