NDR InfiniBand: Validated Connectivity for NVIDIA Quantum-2 HPC

In the era of generative AI, large language models (LLMs), and exascale scientific research, the insatiable demand for data and computational power has pushed traditional networking to its limits. High-Performance Computing (HPC) and AI clusters are no longer defined by processing speed alone; they are fundamentally defined by the network’s ability to move massive datasets with ultra-low latency.

At the forefront of this revolution is the NVIDIA Quantum-2 InfiniBand platform, the gold standard for building high-bandwidth, low-latency fabrics. But the true power of a platform like the NVIDIA QM9790 switch isn’t unlocked by the switch alone. It is enabled by a sophisticated, interdependent ecosystem of firmware, host channel adapters (HCAs), and a high-precision physical layer of cables and transceivers.

A critical, often-overlooked component in this stack is the firmware. The recent NVIDIA Quantum-2 firmware release, version 31.2012.2200 LTS (Long-Term Support), serves as the digital heartbeat of this ecosystem. This article analyzes why this firmware is pivotal for HPC environments and why the hardware compatibility list published alongside it is non-negotiable.

A Technical Analysis for HPC: Why Firmware v31.2012.2200 LTS Matters

Before turning to product selection, this analysis examines the core value proposition of this firmware for HPC, as described in its documentation. This is not just a routine update; it is a foundational release for production-grade supercomputing.

1. The Significance of “LTS” (Long-Term Support) in HPC

For an enterprise, a firmware update might be a routine quarterly task. For an HPC or AI cluster running a single multi-million-dollar simulation or training job for weeks, an unplanned update or a bout of instability is catastrophic.

The “LTS” (Long-Term Support) designation is the most critical feature for these environments. It signals stability, predictability, and continued maintenance over an extended support period. Cluster administrators can build and deploy on this version with confidence that it is a hardened, production-ready foundation. That reduces “rolling update” anxiety and lets them focus on compute jobs rather than network maintenance. In short, this firmware version is a foundation on which stable, large-scale, mission-critical systems can be built.
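Standardizing a fleet on one LTS build is easy to state and easy to drift from. The sketch below shows one way to audit it, assuming each switch’s reported firmware version has already been collected into a dictionary; the inventory, switch names, and the older version string are hypothetical, and how the data is gathered depends on your site’s management tooling. Versions are compared as integer tuples rather than strings so that, for example, a hypothetical "31.999.x" would not sort above "31.2012.x".

```python
# Minimal sketch: flag switches that are not running the pinned LTS firmware.
PINNED_VERSION = "31.2012.2200"  # the LTS build discussed in this article


def parse_version(version: str) -> tuple[int, ...]:
    """Split a dotted version string like '31.2012.2200' into integers."""
    return tuple(int(part) for part in version.strip().split("."))


def audit_fleet(inventory: dict[str, str],
                pinned: str = PINNED_VERSION) -> dict[str, list[str]]:
    """Group switches by whether they match, trail, or exceed the pinned version."""
    pinned_t = parse_version(pinned)
    report: dict[str, list[str]] = {"compliant": [], "older": [], "newer": []}
    for switch, version in sorted(inventory.items()):
        v = parse_version(version)
        if v == pinned_t:
            report["compliant"].append(switch)
        elif v < pinned_t:
            report["older"].append(switch)
        else:
            report["newer"].append(switch)
    return report


if __name__ == "__main__":
    # Hypothetical inventory; "31.2010.1000" is an illustrative older build.
    fleet = {
        "leaf-01": "31.2012.2200",
        "leaf-02": "31.2012.2200",
        "spine-01": "31.2010.1000",
    }
    print(audit_fleet(fleet))
```

Anything landing in "older" or "newer" is a candidate for review before a long-running job is scheduled.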

2. The Power of Validated Interoperability

An HPC cluster is not a homogeneous block. It is a complex, heterogeneous fabric of new and existing hardware, which makes the “Firmware Interoperability” section of the NVIDIA documentation for v31.2012.2200 LTS arguably its most important. According to that section, this firmware has been explicitly validated to interoperate with a range of hardware, including:

- NVIDIA Quantum-2 Switches (e.g., QM9790)

- Older NVIDIA Quantum Switches

- ConnectX-7 HCAs (Host Channel Adapters)

- ConnectX-6 HCAs

This validation is the technical key to building and scaling robust clusters. It lets an organization integrate new Quantum-2 NDR (400Gb/s) switches into an existing fabric with ConnectX-6 adapters (those links run at up to the adapters’ HDR 200Gb/s rate), or build a new cluster with ConnectX-7 adapters, knowing that this combination of firmware and hardware has been tested together. In that sense, the firmware is the common layer that keeps every component speaking the same high-performance language.

3. Unlocking Advanced Management and NDR Speeds

This firmware “complements the NVIDIA Quantum switch with a set of advanced features, allowing easy and remote management of the switch.” In a 1,000-node cluster, calling that “easy and remote management” is an understatement. It underpins the telemetry, network-wide monitoring, and in-network computing capabilities (such as SHARP™) that are essential for optimizing MPI-based workloads and squeezing every last drop of performance from the fabric. It is the software that unlocks the full 400Gb/s NDR speeds the Quantum-2 platform promises, ensuring the hardware’s potential is fully realized.

The “Product Selection” Challenge: Why Hardware Compatibility is Non-Negotiable

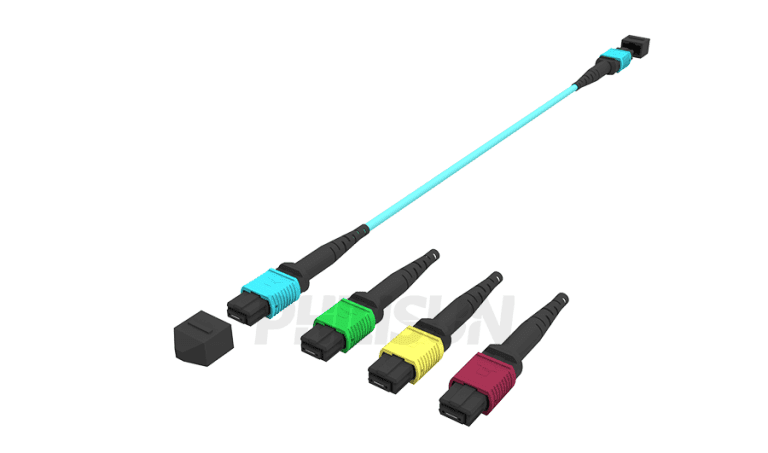

Beyond the firmware itself, the NVIDIA documentation presents extensive tables of “Firmware Compatible Products,” specifically cables and transceivers. These are followed by a stark warning: “NVIDIA does not support InfiniBand cables or modules not qualified or approved by NVIDIA.”

In the HPC world, this is not a sales tactic; it is a critical engineering directive. Here’s why:

- Signal Integrity at 400Gb/s: At NDR speeds (400Gb/s over four lanes of 100Gb/s PAM4 signaling), the physical tolerances are extremely tight. A minuscule imperfection in a DAC (Direct Attach Copper) cable’s shielding or an optical transceiver’s laser alignment can raise the Bit Error Rate (BER). Errors that forward error correction cannot absorb lead to dropped packets and retransmissions, which erode the low-latency advantage of InfiniBand.

- The Latency Killer: HPC applications, particularly those using RDMA (Remote Direct Memory Access), are sensitive to latencies measured in nanoseconds. A non-compliant cable or transceiver can introduce just enough jitter or error-recovery delay to degrade the performance of the entire cluster. The network becomes “congested” not from traffic, but from physical-layer errors.

- System-Wide Instability: A single faulty cable does more than take down one port. It can cause a port to “flap” (repeatedly go down and come back up), forcing the fabric’s Subnet Manager to recompute routes again and again. Those changes ripple across the entire cluster and can derail a week-long compute job.
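The arithmetic behind the first and third points is simple. At a line rate R (bits per second) and a bit error rate BER, the expected number of bit errors per second is R × BER, so at 400Gb/s even a BER of 10⁻¹² still means roughly one bit error every 2.5 seconds. The sketch below computes that figure and adds a naive flap detector over a port’s link-state-change timestamps. The BER values, window length, and transition threshold are illustrative assumptions, not NVIDIA specifications, and real NDR links apply forward error correction, so pre-FEC and post-FEC error rates differ.

```python
from collections import deque
from typing import Iterable

NDR_LINE_RATE_BPS = 400e9  # 400Gb/s NDR port rate (ignores encoding/FEC overhead)


def errors_per_second(line_rate_bps: float, ber: float) -> float:
    """Expected bit errors per second: line rate multiplied by bit error rate."""
    return line_rate_bps * ber


def seconds_between_errors(line_rate_bps: float, ber: float) -> float:
    """Mean time between bit errors; infinite when the BER is zero."""
    rate = errors_per_second(line_rate_bps, ber)
    return float("inf") if rate == 0 else 1.0 / rate


def is_flapping(event_times: Iterable[float], window_s: float = 60.0,
                max_transitions: int = 4) -> bool:
    """Hypothetical flap heuristic: True if more than `max_transitions`
    link up/down events fall within any `window_s`-second span."""
    window: deque = deque()
    for t in sorted(event_times):
        window.append(t)
        # Drop events that are older than the window, measured from the newest one.
        while t - window[0] > window_s:
            window.popleft()
        if len(window) > max_transitions:
            return True
    return False


if __name__ == "__main__":
    for ber in (1e-12, 1e-15):  # illustrative BER values
        gap = seconds_between_errors(NDR_LINE_RATE_BPS, ber)
        print(f"BER {ber:.0e}: one bit error every {gap:,.1f} s on average")
    # Hypothetical link-state-change timestamps (seconds) for two ports.
    print(is_flapping([0, 5, 12, 20, 31]))
    print(is_flapping([0, 300, 900, 1800]))
```

A sliding window keeps the check cheap for long event logs; production monitoring would also account for error counters and administrative port changes, which this sketch ignores.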

The connectivity matrices in the documentation (Switch-to-Switch, HCA-to-Switch) are a blueprint for success. They reflect extensive validation intended to ensure that every component, from the HCA to the transceiver to the cable to the switch, performs as a single, cohesive unit.
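Treating the connectivity matrices as data makes them easy to check against a cabling plan before anything is ordered. The sketch below assumes a hypothetical excerpt of such a matrix, keyed by the pair of device models at each end of a link. The part numbers are placeholders, not real qualified parts, and would need to be filled in from the official tables for the firmware release in use.

```python
from typing import NamedTuple


class Link(NamedTuple):
    end_a: str  # device model at one end, e.g. "QM9790" or "ConnectX-7"
    end_b: str  # device model at the other end
    part: str   # cable or transceiver part number used for this link


def pair_key(a: str, b: str) -> tuple:
    """Order-independent key so QM9790<->ConnectX-7 equals ConnectX-7<->QM9790."""
    return tuple(sorted((a, b)))


# Hypothetical excerpt of a connectivity matrix. Part numbers are PLACEHOLDERS;
# populate this from the official compatibility tables for your firmware release.
VALIDATED = {
    pair_key("QM9790", "QM9790"): {"PN-SW-SW-EXAMPLE-1", "PN-SW-SW-EXAMPLE-2"},  # switch-to-switch
    pair_key("ConnectX-7", "QM9790"): {"PN-HCA-SW-EXAMPLE-1"},                   # HCA-to-switch
}


def unvalidated_links(plan: list) -> list:
    """Return every planned link whose part is not listed for that device pairing."""
    return [
        link for link in plan
        if link.part not in VALIDATED.get(pair_key(link.end_a, link.end_b), set())
    ]


if __name__ == "__main__":
    plan = [
        Link("QM9790", "QM9790", "PN-SW-SW-EXAMPLE-1"),
        Link("QM9790", "ConnectX-7", "PN-HCA-SW-EXAMPLE-1"),
        Link("ConnectX-7", "QM9790", "PN-THIRD-PARTY-UNKNOWN"),
    ]
    for link in unvalidated_links(plan):
        print("Not in validated matrix:", link)
```

With this plan, only the third link is reported. A device pairing that is missing from the matrix entirely is also flagged, on the assumption that "not listed" should be treated as "not validated."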

PHILISUN: Your Partner for a Validated, High-Performance Fabric

This is precisely where PHILISUN bridges the gap between HPC’s potential and its physical reality. Building a state-of-the-art InfiniBand network is not just about buying the right switch; it’s about building a fully validated, end-to-end physical infrastructure.

As our own HPC solutions literature states, PHILISUN “fully supports NVIDIA® InfiniBand networks” and is “mass-producing 400G-1.6T high-performance optical modules.” We don’t just sell components; we provide the engineered and validated connectivity that high-performance platforms demand.

Our commitment to this ecosystem is absolute. We understand that a transceiver or Active Optical Cable (AOC) is not a commodity; it is a mission-critical component. That is why “our products undergo full-link optimization from chips to firmware.” This rigorous process ensures that every PHILISUN optical transceiver, DAC, and AOC meets or exceeds the stringent requirements of NVIDIA’s ecosystem.

When you select PHILISUN as your connectivity partner, you are not just buying a product. You are investing in a solution that is pre-validated to work seamlessly with platforms like the NVIDIA Quantum-2 running firmware v31.2012.2200 LTS. You are eliminating the risk of physical-layer instability and ensuring your cluster performs at its peak, from the first day and for its entire lifecycle.

Conclusion: Building the Future of AI, One Validated Connection at a Time

Building a next-generation HPC or AI cluster is a holistic endeavor. It begins with a powerful platform like the NVIDIA Quantum-2, is sustained by stable and advanced firmware like v31.2012.2200 LTS, and is ultimately only as strong as its weakest physical link.

By supporting only cables and modules it has qualified, NVIDIA ensures that its platform can deliver on its promise of world-record performance. The choice of connectivity provider therefore becomes one of the most critical decisions in the entire cluster design.

Don’t let your investment in cutting-edge compute be bottlenecked by an unvalidated physical layer. Partner with PHILISUN to build a robust, reliable, and high-performance network fabric that is ready for the future of AI and HPC.